The Ethics of Artificial Intelligence: Can Machines Make Moral Decisions?

- keystone

- Sep 29, 2025

- 5 min read

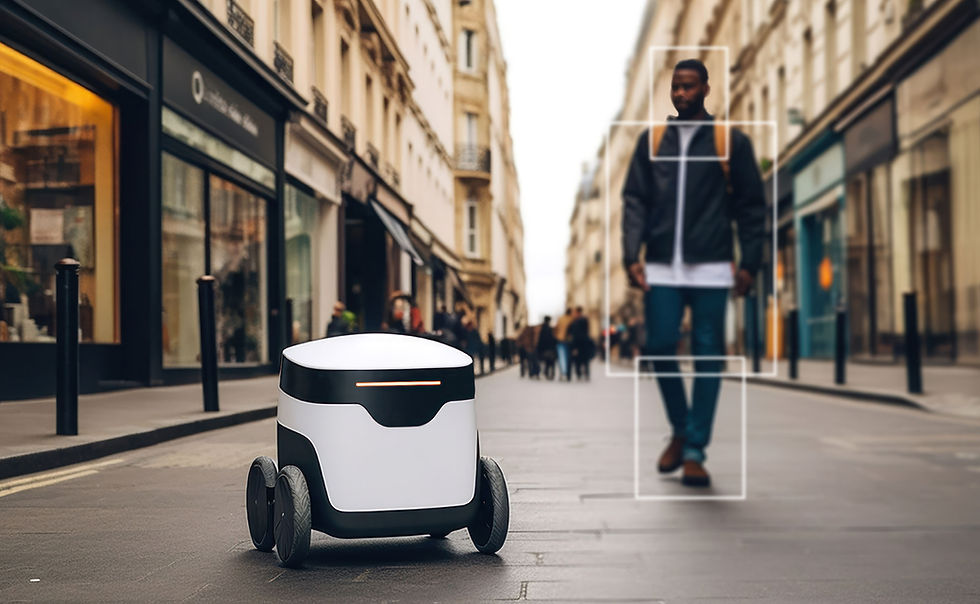

As artificial intelligence (AI) continues to evolve, its presence in decision-making processes is becoming increasingly widespread — from autonomous vehicles to judicial risk assessments. This shift raises an essential ethical question: Should machines be allowed to make moral decisions? And if so, must they be held to the same standards of morality as humans?

Moral Judgement and the Machine Mind

Machines, unlike humans, do not possess emotions, empathy, or consciousness — qualities traditionally seen as essential for moral reasoning. While AI systems can process vast quantities of data and simulate decision-making based on statistical probabilities, it is questionable whether they can truly understand the consequences of their actions in the human sense.

Some ethicists argue that it is essential that moral agency remain human. They state that if machines are entrusted with moral decisions, society might face a dangerous erosion of personal responsibility. One researcher recently stated that “AI systems are designed to follow patterns, not principles,” suggesting that true moral judgement may be beyond algorithmic comprehension.

The Question of Accountability

A central dilemma is accountability. If an AI makes a harmful decision, who should be held responsible? The developer, the user, or the machine itself?

It has been suggested that, in scenarios involving autonomous vehicles, the manufacturer must accept legal and ethical responsibility. However, others argue that society should not rely too heavily on punitive measures when the AI has simply acted according to its programmed logic.

In a recent debate, one expert pointed out that “we should not confuse the appearance of intelligence with moral insight,” and added that any delegation of ethical responsibility to machines should be treated with extreme caution.

Bias and the Illusion of Objectivity

Another significant issue is bias. Contrary to popular belief, AI is not inherently neutral. It learns from human-generated data, which can reflect and even amplify societal prejudices. This creates a paradox: we might trust AI for its supposed impartiality, yet it may reproduce the very biases it was intended to avoid.

It is essential that we critically examine not just what AI can do, but what it ought to do. Should AI be used to decide who gets a loan or a job interview? If the training data is biased, even the most sophisticated system might reinforce existing inequalities.

A Cautious Future

In conclusion, while AI can simulate decision-making with remarkable speed and consistency, it must not be mistaken for a moral agent. Machines operate within the frameworks given to them — they do not possess values, nor do they comprehend the full weight of ethical consequences.

It is vital that human oversight remains central. AI might support ethical decision-making, but it should not replace it. The moral responsibility lies with those who design, deploy, and regulate these systems.

💬 Discussion Questions (C1 Level)

Should AI ever be given full autonomy in making moral decisions? Why or why not?

In what ways might machine learning reinforce social biases?

Who do you believe should be held accountable when an AI system makes a harmful decision?

Can a machine truly understand concepts like empathy, fairness, or justice?

It is often said that AI is objective. Do you agree or disagree with this statement? Support your answer.

📚 Advanced Vocabulary List

| Word / Phrase | Definition |
| --- | --- |
| Morality | Principles concerning the distinction between right and wrong behavior. |
| Accountability | Responsibility for one's actions and the obligation to explain them. |
| Bias | A prejudice or predisposition toward one group or idea, often in an unfair way. |
| Consequence | The result or effect of an action or condition, often negative. |
| Dilemma | A situation in which a difficult choice has to be made between two or more alternatives. |
| It is essential that | A formal phrase used to express necessity or strong recommendation. |
| Arguably | Used to express an idea that could be debated; open to argument. |
| Subjunctive mood | A grammatical structure used to express doubt, necessity, or hypotheticals (e.g., It is vital that he be informed.). |

✅ Subjunctive Mood – Grammar Questions (C1 Level)

Part A: Multiple Choice (Choose the correct option)

It is essential that every citizen ____ access to unbiased information.

a) has

b) have

c) will have

d) had

The committee recommended that the developer ____ the AI algorithm to reduce bias.

a) changes

b) changed

c) change

d) had changed

If it were up to me, I would insist that the system ____ more transparent.

a) be

b) is

c) was

d) being

It is crucial that the ethical guidelines ____ before any AI system is deployed.

a) establishes

b) be established

c) are established

d) was established

The professor suggested that students ____ the ethical consequences of AI decision-making.

a) considers

b) consider

c) considered

d) had considered

Part B: Error Correction

Find and correct the error in each sentence.

It’s vital that the government enacts strict regulations on AI.

The manager requested that the data was reviewed thoroughly.

The expert insisted that the algorithm is modified to prevent discrimination.

It’s important that she attends the ethics seminar.

They demanded that the results are published publicly.

Part C: Sentence Completion (Open-ended)

Complete the following sentences using the correct subjunctive structure.

It is essential that the AI system __________ (to follow) ethical guidelines.

The researchers recommended that the machine __________ (to not process) sensitive data.

I suggested that he __________ (to be) more cautious when discussing moral dilemmas.

It is crucial that the policy __________ (to reflect) public values.

The ethicist proposed that AI developers __________ (to undergo) formal training in ethics.

Answers

✅ Part A: Multiple Choice

b) have → It is essential that every citizen have access... (subjunctive)

c) change → ...recommended that the developer change the AI algorithm...

a) be → ...insist that the system be more transparent.

b) be established → ...that the ethical guidelines be established...

b) consider → ...students consider the ethical consequences...

✏️ Part B: Error Correction

❌ enacts → ✅ enact: It’s vital that the government enact strict regulations on AI.

❌ was reviewed → ✅ be reviewed: The manager requested that the data be reviewed thoroughly.

❌ is modified → ✅ be modified: The expert insisted that the algorithm be modified to prevent discrimination.

❌ attends → ✅ attend: It’s important that she attend the ethics seminar.

❌ are published → ✅ be published: They demanded that the results be published publicly.

✍️ Part C: Sentence Completion (Suggested Answers)

It is essential that the AI system follow ethical guidelines.

The researchers recommended that the machine not process sensitive data.

I suggested that he be more cautious when discussing moral dilemmas.

It is crucial that the policy reflect public values.

The ethicist proposed that AI developers undergo formal training in ethics.
